Wearable computing feels like the modern-day Space Race. Every major tech company is investing heavily in research in an attempt to crank out the “next big (wearable) thing”. As user interface designers, we’re excited about the potential of these gadgets to make life better.

Wearable technology comes in two groups:

• Single-use devices.

• Secondary screen devices.

Single-use devices aim to solve only one need, but may do so exceptionally well for their time. Products in this space include the Nike FuelBand and the Bluetooth headsets of old.

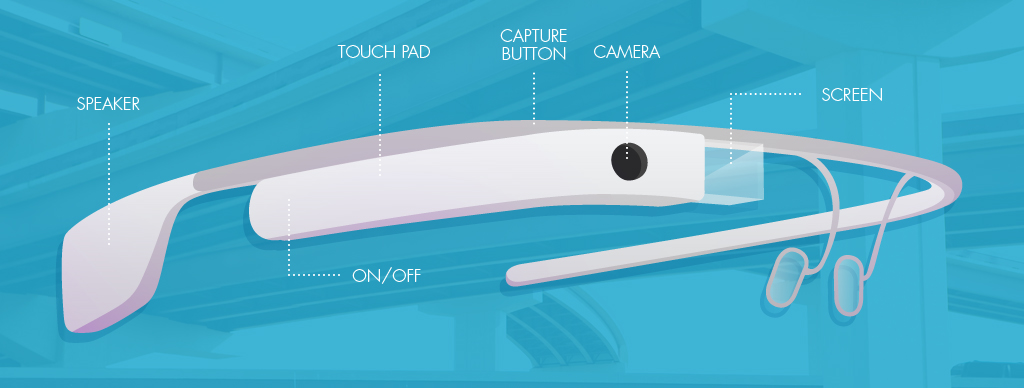

Secondary screen devices cover multiple functions that often already exist on your phone, tablet, or desktop; for example, they let you check your text messages without pulling out your phone. These devices aren’t creating new experiences; they only move the current experience of your smartphone or tablet to a new location: your wrist or head. Current products in this category include Google Glass and Samsung Gear.

Now here comes the crazy part!

We expect most single-use devices to eventually fall by the wayside, replaced by their more capable and versatile counterparts: secondary screen devices.

Think of this in terms of your cell phone. Originally you used a phone only to make calls and had separate devices for calculations, weather, and music. As computing power grew and user interfaces became more sophisticated, the smartphone was born. Today, wearable computing is approaching the same convergence of hardware and software.

Here is the creative ninja challenge:

While wearable computing hardware is improving at an exponential rate, the user interface and user experience of these devices are moving glacially. Why? What can user interface designers do to fix that?

In the coming weeks, I’ll be exploring what a week with Google Glass looks like, as well as some of my ideas for improving the wearable experience. My hope is to explore solutions to some of these challenges before these devices hit the market.

The HTC Vive VR system looks very promising, but the Oculus Rift is far ahead in terms of available games and compatible movies.